AI AGENTS

Building Trust in AI-Assisted Action

Year

2026

Company

Superhuman Go

Role

Product Design

Background

AI actions lacked clarity, control, and trust

AI agents in Superhuman could take actions like sending emails or creating calendar events, but the experience was limited to a text-based chat interface. I identified a critical gap: users couldn’t confidently verify or adjust an action before the agent executed it.

This led to:

Users confirming actions by replying “yes” or “no” without seeing the full action

Incomplete previews (e.g. truncated email content)

No way to edit before execution

Frequent re-prompting for small corrections

Result: low trust, high friction, and hesitation to rely on agent-driven actions.

Previous Experience

The system surfaced actions as plain text in chat rather than as structured, interactive UI.

❌ No clear preview of the full action

❌ No ability to edit before execution

❌ Interruptions lacked clarity

❌ Small fixes required re-prompting from scratch

Users weren’t collaborating with the agent; they were correcting it after the fact.

Solution

Making AI actions reviewable, editable, and interruptible

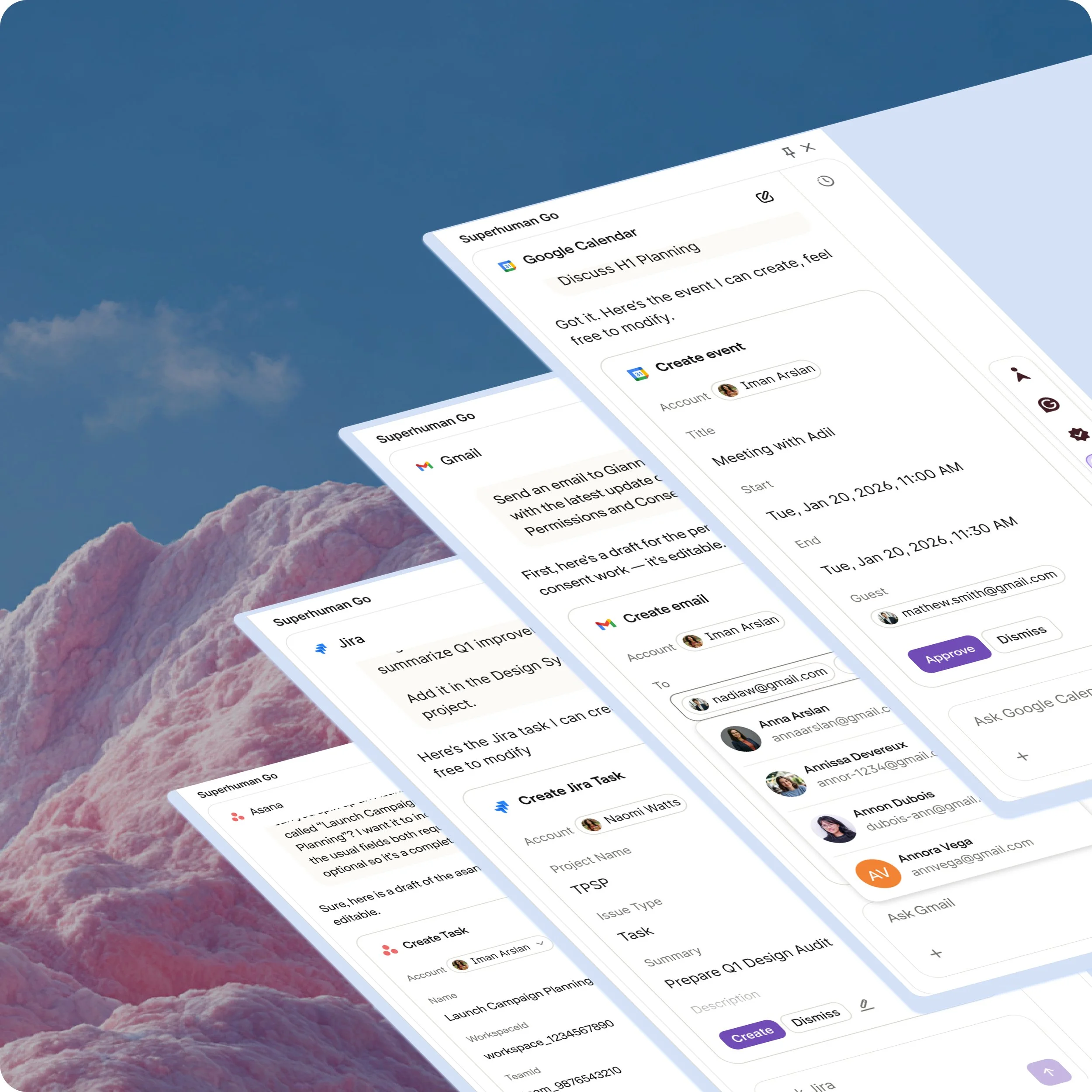

I redesigned how agents request permission by introducing Action Cards: structured, interactive UI embedded directly in chat. This shifted the interaction from passive confirmation to active collaboration.

With this system, users can:

Understand the full action at a glance

Edit parameters inline before committing

Explicitly approve or dismiss

Continue chatting without breaking flow

Outcome: AI actions became something users shape, not just approve.

Built a scalable interaction model across agents

I designed a reusable interaction model for agent-driven actions across 10+ integrations, including Gmail, Google Calendar, Slack, Asana, Jira, and Spotify.

Core principles

One pattern, many tools → consistent mental model

Inline control → edit without leaving chat

Structured clarity → replaces ambiguous text

Card system

Active → editable, fully expanded

Accepted / Dismissed → clear outcome

Collapsed → condenses when the user moves on

Interaction

All key parameters editable inline

Clear summary before execution

Handles interruptions and changing intent

Google Calendar

Create Event → Edit → Invite

Agent proposes event details

User edits time / attendees

User approves → event is created

Jira

Modify Issue → Assign

User requests updates to an existing issue

Agent proposes changes

User reviews and edits inline

User approves → updates are applied

Spotify

In this example, the Spotify agent generates a playlist and presents it as an Action Card for review. Users can edit details inline and approve with confidence; nothing is created until they do.

Tradeoffs

Balancing flexibility, clarity, and system complexity

I introduced structured UI into a conversational system without breaking its flow. I chose inline Action Cards over modals to preserve context, accepting added layout complexity, which I mitigated through visual hierarchy and collapse behavior.

Pending actions auto-dismiss when the user sends new input, keeping them aligned with the user’s current intent, and regenerate predictably to avoid confusion. I scoped structured UI to action-based tasks to preserve the agent’s flexibility elsewhere. Finally, I prioritized a reusable system across integrations, accepting some loss of tool-specific optimization in favor of consistency and scale.
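To make the card lifecycle concrete, the states from the card system (Active, Accepted / Dismissed, Collapsed) and the auto-dismiss rule can be sketched as a small state machine. This is a minimal TypeScript sketch under stated assumptions, not the production implementation: the names `ActionCard`, `createCard`, `reduceCard`, and the `userMessage` event are hypothetical, and regeneration is left out. It encodes only three rules: edits and approval apply while a card is Active, new user input auto-dismisses a pending card, and any card collapses once the user moves on.

```typescript
// Hypothetical sketch of the Action Card lifecycle; names and rules are
// illustrative assumptions, not Superhuman's actual code.

type Outcome = "pending" | "accepted" | "dismissed";

interface ActionCard {
  outcome: Outcome;               // "pending" while the card is Active
  expanded: boolean;              // false once the card is Collapsed
  params: Record<string, string>; // editable fields, e.g. time or attendees
}

type CardEvent =
  | { kind: "edit"; field: string; value: string }
  | { kind: "approve" }
  | { kind: "dismiss" }
  | { kind: "userMessage" }; // the user keeps chatting

// A freshly proposed action starts Active: pending and fully expanded.
function createCard(params: Record<string, string>): ActionCard {
  return { outcome: "pending", expanded: true, params: { ...params } };
}

// Returns a new card; the input card is never mutated.
function reduceCard(card: ActionCard, event: CardEvent): ActionCard {
  const pending = card.outcome === "pending";
  switch (event.kind) {
    case "edit":
      // Inline edits only apply while the card is still Active.
      return pending
        ? { ...card, params: { ...card.params, [event.field]: event.value } }
        : card;
    case "approve":
      return pending ? { ...card, outcome: "accepted" } : card;
    case "dismiss":
      return pending ? { ...card, outcome: "dismissed" } : card;
    case "userMessage":
      // New input auto-dismisses a pending card and collapses any card.
      return {
        ...card,
        outcome: pending ? "dismissed" : card.outcome,
        expanded: false,
      };
    default:
      return card;
  }
}
```

Keeping the outcome separate from whether the card is expanded lets a collapsed card still show whether it was accepted or dismissed, and approval becomes the single gate before an action would execute.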

Outcome

Shifting AI from execution to collaboration

Reduced friction in agent workflows by letting users make small fixes inline instead of re-prompting

Increased clarity and transparency of pending actions

Improved user trust in AI-assisted execution

Increased action completion on first pass (directional)

Reduced repeated prompts to correct errors

Increased willingness to approve agent-driven actions

Beyond the feature, this work introduced a trust-first design lens to agent interactions.

I helped reframe how the team approached AI actions: from optimizing for speed of execution to designing for user confidence, control, and clarity.

This shift influenced how we evaluated future agent capabilities, treating transparency and editability as core requirements rather than enhancements.

Established a scalable interaction pattern across 10+ integrations

Enabled expansion into multi-step and automated workflows

Impact: laid the foundation for evolving AI from a command-based system into a collaborative partner where users remain in control as automation increases.